Abstract

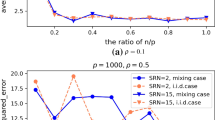

This paper considers a high-dimensional linear regression problem in which the predictors have complex correlation structures. We propose a graph-constrained regularization procedure, named Sparse Laplacian Shrinkage with the Graphical Lasso Estimator (SLS-GLE). The procedure uses the estimated precision matrix to describe the conditional dependence pattern among predictors, and encourages sparsity in both the regression model and the graphical model. We introduce a Laplacian quadratic penalty that incorporates the graph information, and discuss in detail the advantages of using the precision matrix to construct the Laplacian matrix. Theoretical properties and numerical comparisons show that the proposed method improves both model interpretability and estimation accuracy. We also apply the method to a financial problem and show that the proposed procedure is successful in asset selection.
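
To make the idea of a Laplacian quadratic penalty built from a precision matrix concrete, here is a minimal sketch in plain Python. It is not the authors' implementation. The precision matrix `OMEGA` is a hypothetical stand-in for a graphical lasso estimate, and using partial correlations as signed edge weights is an assumption made for illustration; the abstract does not specify the weighting.

```python
import math


def partial_correlations(omega):
    """Partial correlations from a precision matrix:
    rho_jk = -omega_jk / sqrt(omega_jj * omega_kk) for j != k."""
    p = len(omega)
    rho = [[0.0] * p for _ in range(p)]
    for j in range(p):
        for k in range(p):
            if j != k:
                rho[j][k] = -omega[j][k] / math.sqrt(omega[j][j] * omega[k][k])
    return rho


def signed_laplacian(adj):
    """L = D - A, with degrees d_j = sum_k |a_jk|.
    Using absolute values in D keeps L positive semidefinite
    even when some edge weights are negative."""
    p = len(adj)
    lap = [[-adj[j][k] for k in range(p)] for j in range(p)]
    for j in range(p):
        lap[j][j] = sum(abs(adj[j][k]) for k in range(p) if k != j)
    return lap


def laplacian_penalty(beta, lap):
    """Quadratic form beta' L beta, which equals
    sum over j < k of |a_jk| * (beta_j - sign(a_jk) * beta_k)^2."""
    p = len(beta)
    return sum(beta[j] * lap[j][k] * beta[k] for j in range(p) for k in range(p))


# Hypothetical sparse precision matrix (assumed, not from the paper):
# predictors 0 and 1 are conditionally dependent, predictor 2 is isolated.
OMEGA = [
    [2.0, -1.0, 0.0],
    [-1.0, 2.0, 0.0],
    [0.0, 0.0, 1.0],
]

RHO = partial_correlations(OMEGA)
LAP = signed_laplacian(RHO)

# Equal coefficients on the linked pair versus opposite-signed ones.
PENALTY_SMOOTH = laplacian_penalty([1.0, 1.0, 5.0], LAP)
PENALTY_ROUGH = laplacian_penalty([1.0, -1.0, 5.0], LAP)
```

In this toy setup the zero entries of the precision matrix remove edges from the graph, so the isolated third predictor contributes nothing to the penalty, while the linked pair is penalized only when its coefficients differ. A full procedure would add this quadratic term, together with a sparsity penalty, to a least-squares loss.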


Acknowledgements

This work was supported by the National Natural Science Foundation of China (Grant Nos. 12001557, 11671059) and the Youth Talent Development Support Program of the Central University of Finance and Economics (QYP202104).


Cite this article

Xia, S., Yang, Y. & Yang, H. Sparse Laplacian Shrinkage with the Graphical Lasso Estimator for Regression Problems. TEST 31, 255–277 (2022). https://doi.org/10.1007/s11749-021-00779-7

DOI: https://doi.org/10.1007/s11749-021-00779-7