Abstract

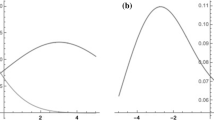

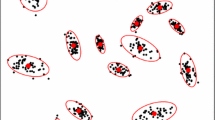

The paper investigates the mixture-of-experts model. A mixture of experts combines several experts, which are local approximation models, with a gate function that weighs the experts and forms their ensemble. In this work, each expert is a linear model, and the gate function is a neural network with a softmax on the last layer. The paper analyzes various prior distributions for each expert, and the authors propose a method that takes into account the relationship between the prior distributions of different experts. The EM algorithm optimizes both the parameters of the local models and the parameters of the gate function. As an application, the paper solves a shape recognition problem on images: each expert fits one circle in the image and recovers its parameters, namely the coordinates of the center and the radius. The computational experiment tests the proposed method on synthetic and real data; the real data is a human eye image from the iris detection problem.
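The abstract gives no formulas, so the following is only a minimal sketch of how a mixture of linear experts can fit circles with EM, under assumptions that are not taken from the paper. It assumes (i) the standard algebraic circle parameterization x² + y² = 2ax + 2by + (r² − a² − b²), which makes each expert a linear model with weights [2a, 2b, r² − a² − b²] from which the center (a, b) and radius r are recovered; (ii) a single-layer softmax gate over [x, y, 1], a simplification of the paper's neural-network gate; (iii) an isotropic Gaussian prior N(0, α⁻¹I) on each expert's weights, with α = 0 (plain maximum likelihood) by default, standing in for the paper's priors, whose coupled form is not reproduced here. The function names `fit_circle_moe`, `circle_features`, `weights_to_circle`, the parameter `alpha`, and the sort-by-x initialization are all hypothetical.

```python
import numpy as np


def circle_features(points):
    """Map points (x, y) to the design row [x, y, 1] and the target x^2 + y^2.

    Assumed parameterization: a circle with center (a, b) and radius r satisfies
    x^2 + y^2 = 2a*x + 2b*y + (r^2 - a^2 - b^2), which is linear in the weights.
    """
    x, y = points[:, 0], points[:, 1]
    return np.column_stack([x, y, np.ones_like(x)]), x**2 + y**2


def weights_to_circle(w):
    """Recover (a, b, r) from expert weights w = [2a, 2b, r^2 - a^2 - b^2]."""
    a, b = w[0] / 2.0, w[1] / 2.0
    return a, b, np.sqrt(max(w[2] + a**2 + b**2, 0.0))


def log_softmax(z):
    """Row-wise log-softmax, shifted by the row maximum for numerical stability."""
    z = z - z.max(axis=1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=1, keepdims=True))


def fit_circle_moe(points, n_experts, alpha=0.0, n_iter=50, gate_lr=0.1, gate_steps=20):
    """Hypothetical EM sketch: linear experts (one circle each) plus a linear softmax gate."""
    phi, t = circle_features(points)
    n, d = phi.shape
    # Hypothetical initialization: hard split into contiguous chunks sorted by x.
    gamma = np.zeros((n, n_experts))
    for k, idx in enumerate(np.array_split(np.argsort(points[:, 0]), n_experts)):
        gamma[idx, k] = 1.0
    V = np.zeros((d, n_experts))   # gate weights (assumed single-layer softmax gate)
    W = np.zeros((n_experts, d))   # expert weights, one row per circle
    sigma2 = np.ones(n_experts)    # expert noise variances
    for _it in range(n_iter):
        # M-step for experts: weighted MAP fit under an assumed N(0, alpha^-1 I) prior;
        # alpha = 0 reduces to weighted least squares.
        for k in range(n_experts):
            g = gamma[:, k]
            A = phi.T @ (phi * g[:, None]) + alpha * sigma2[k] * np.eye(d)
            W[k] = np.linalg.solve(A, phi.T @ (g * t))
            resid = t - phi @ W[k]
            sigma2[k] = max(g @ resid**2 / (g.sum() + 1e-12), 1e-8)
        # M-step for the gate: gradient ascent on sum_ik gamma_ik * log pi_k(x_i).
        for _step in range(gate_steps):
            pi = np.exp(log_softmax(phi @ V))
            V += gate_lr * phi.T @ (gamma - pi) / n
        # E-step: gamma_ik proportional to pi_k(x_i) * N(t_i | w_k^T phi_i, sigma2_k).
        resid = t[:, None] - phi @ W.T
        log_lik = -0.5 * np.log(2 * np.pi * sigma2) - resid**2 / (2 * sigma2)
        gamma = np.exp(log_softmax(log_softmax(phi @ V) + log_lik))
    return [weights_to_circle(w) for w in W], V


# Usage on synthetic, noisy points from two circles separated along the x axis.
rng = np.random.default_rng(0)
theta = rng.uniform(0.0, 2 * np.pi, size=(2, 100))
c1 = np.column_stack([-3 + np.cos(theta[0]), np.sin(theta[0])])
c2 = np.column_stack([3 + 1.5 * np.cos(theta[1]), 1.5 * np.sin(theta[1])])
points = np.vstack([c1, c2]) + rng.normal(scale=0.01, size=(200, 2))
circles, gate_weights = fit_circle_moe(points, n_experts=2)
for a, b, r in circles:
    print(f"center=({a:.2f}, {b:.2f}), radius={r:.2f}")
```

The sort-by-x initialization only suits circles that are separated along the x axis; concentric circles, such as the pupil and iris boundaries in an eye image, would likely need a different initialization and a gate that can separate points by distance from the center rather than by a linear boundary.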



Funding

This research was supported by the Russian Foundation for Basic Research (project nos. 19-07-01155, 19-07-0875) and by NTI (project “Mathematical methods of big data analysis” 13/1251/2018).


Cite this article

Grabovoy, A.V., Strijov, V.V. Prior Distribution Selection for a Mixture of Experts. Comput. Math. and Math. Phys. 61, 1140–1152 (2021). https://doi.org/10.1134/S0965542521070071


DOI: https://doi.org/10.1134/S0965542521070071