Abstract

In this paper, we consider convex quadratic optimization problems with indicator variables when the matrix Q defining the quadratic term in the objective is sparse. We use a graphical representation of the support of Q, and show that if this graph is a path, then we can solve the associated problem in polynomial time. This enables us to construct a compact extended formulation for the closure of the convex hull of the epigraph of the mixed-integer convex problem. Furthermore, motivated by inference problems with graphical models, we propose a novel decomposition method for a class of general (sparse) strictly diagonally dominant Q, which leverages the efficient algorithm for the path case. Our computational experiments demonstrate the effectiveness of the proposed method compared to state-of-the-art mixed-integer optimization solvers.
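To make the problem class concrete, the following is a minimal sketch and not the polynomial-time algorithm of the paper. It assumes the problem takes the form \(\min \; a^\top z + b^\top x + \tfrac{1}{2} x^\top Q x\) subject to \(x_i(1-z_i)=0\) and \(z\in \{0,1\}^n\), with \(Q\) positive definite. This form is an assumption inferred from the abstract and the notes, and the helper names `solve_spd` and `brute_force` are hypothetical. The sketch enumerates all \(2^n\) supports, which is only viable for tiny instances, and uses a tridiagonal \(Q\), whose support graph is a path.

```python
from itertools import product


def solve_spd(A, rhs):
    """Solve A y = rhs by Gaussian elimination without pivoting.

    Assumes A is symmetric positive definite, so every pivot is positive.
    """
    n = len(rhs)
    M = [list(A[i]) + [rhs[i]] for i in range(n)]  # augmented matrix
    for c in range(n):
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    y = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(M[r][k] * y[k] for k in range(r + 1, n))
        y[r] = (M[r][n] - tail) / M[r][r]
    return y


def brute_force(Q, a, b):
    """Enumerate every indicator vector z and solve the continuous part.

    For a fixed support S, the minimizer satisfies Q_SS x_S = -b_S, and the
    continuous part of the objective then equals 0.5 * b_S^T x_S.
    """
    n = len(b)
    # z = 0 forces x = 0, which gives objective value 0.
    best_val, best_z, best_x = 0.0, (0,) * n, [0.0] * n
    for z in product((0, 1), repeat=n):
        S = [i for i in range(n) if z[i] == 1]
        if not S:
            continue
        Q_SS = [[Q[i][j] for j in S] for i in S]
        x_S = solve_spd(Q_SS, [-b[i] for i in S])
        val = sum(a[i] for i in S) + 0.5 * sum(b[i] * xi for i, xi in zip(S, x_S))
        if val < best_val:
            x = [0.0] * n
            for i, xi in zip(S, x_S):
                x[i] = xi
            best_val, best_z, best_x = val, z, x
    return best_val, best_z, best_x


# Illustrative data (made up): tridiagonal Q, so the support graph is a path.
Q = [[2.0, -1.0, 0.0],
     [-1.0, 2.0, -1.0],
     [0.0, -1.0, 2.0]]
a = [0.3, 0.3, 0.3]
b = [-1.0, 0.5, -1.0]
value, z, x = brute_force(Q, a, b)
print(value, z, x)
```

Enumeration of this kind is useful mainly as a correctness check on small instances for any faster method.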

Notes

They consider a slightly different term, where the sparsity is imposed via a cardinality constraint \(a^\top z\le k\) instead of a penalization in the objective.

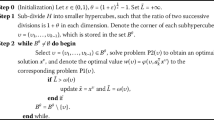

For step size \(s_k=1/k\), we modify line 6 of Algorithm 2 to \((\alpha ,\beta )\leftarrow (\alpha ,\beta )+s_k \rho (\bar{x},\bar{z})\) (without normalization), since this version performed better in our computations.

Acknowledgements

We thank the associate editor and the referees, whose comments improved this paper.

This research is supported, in part, by NSF grants 2006762, 2007814, 2152776, and ONR grant N00014-22-1-2127.

Cite this article

Liu, P., Fattahi, S., Gómez, A. et al. A graph-based decomposition method for convex quadratic optimization with indicators. Math. Program. 200, 669–701 (2023). https://doi.org/10.1007/s10107-022-01845-0

DOI: https://doi.org/10.1007/s10107-022-01845-0

Keywords

- Quadratic optimization

- Indicator variables

- Sparsity

- Decomposition

- Graphical models

- Fenchel dual

- Convex hull