Abstract

In recent years, graph neural networks have been widely used for vision-to-language tasks and have achieved promising results. In particular, the graph convolutional network (GCN) can capture the spatial and semantic relationships needed for visual question answering (VQA). However, applying GCN to VQA datasets with different subtasks can lead to varying results. The training and testing set sizes, the evaluation metrics, and the hyperparameters used are further factors that affect VQA results. These factors can be placed under a common evaluation scheme in order to obtain a fair evaluation of GCN-based results for VQA. This study proposes a GCN framework for VQA based on fine-tuned word representations to handle reasoning-type questions. The framework's performance is evaluated using various performance measures. The results obtained on the GQA and VQA 2.0 datasets slightly outperform most existing methods.
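To make the graph convolution mentioned above concrete, the following is a minimal sketch of a single GCN layer using the commonly cited normalized propagation rule, ReLU(D^-1/2 (A + I) D^-1/2 X W). It is an illustration only and not necessarily the layer used in this study; the function name `gcn_layer`, the toy graph, and the placeholder features and weights are all assumptions introduced here.

```python
import numpy as np

def gcn_layer(adj, feats, weight):
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    a_hat = adj + np.eye(adj.shape[0])      # add self-loops to the adjacency matrix
    deg = a_hat.sum(axis=1)                 # node degrees (at least 1 due to self-loops)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    # Symmetric normalization: scale entry (i, j) by 1 / sqrt(deg_i * deg_j)
    norm_adj = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    # Aggregate neighbor features, project them, then apply ReLU
    return np.maximum(norm_adj @ feats @ weight, 0.0)

# Hypothetical toy graph: 3 image regions, region 0 linked to regions 1 and 2.
adj = np.array([[0.0, 1.0, 1.0],
                [1.0, 0.0, 0.0],
                [1.0, 0.0, 0.0]])
feats = np.eye(3)          # placeholder node features (one-hot per region)
weight = np.ones((3, 2))   # placeholder weights; a real model would learn these
out = gcn_layer(adj, feats, weight)
print(out.shape)
```

In a VQA setting the nodes would typically be detected image regions or question words, and the edges would encode spatial or semantic relations between them; how those graphs are built is specific to each method.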

References

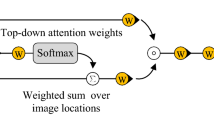

Anderson P, He X, Buehler C, Teney D, Johnson M, Gould S, Zhang L (2018) Bottom-up and top-down attention for image captioning and visual question answering. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 6077-6086

Antol S, Agrawal A, Lu J, Mitchell M, Batra D, Zitnick CL, Parikh D (2015) Vqa: visual question answering. In: Proceedings of the IEEE international conference on computer vision. pp. 2425-2433

Baltrušaitis T, Ahuja C, Morency LP (2018) Multimodal machine learning: a survey and taxonomy. IEEE Trans Pattern Anal Mach Intell 41(2):423–443

Cadene R, Ben-Younes H, Cord M, Thome N (2019) Murel: multimodal relational reasoning for visual question answering. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 1989-1998

Cho K, van Merriënboer B, Bahdanau D, Bengio Y (2014, October) On the properties of neural machine translation: encoder–decoder approaches. In: Proceedings of SSST-8, eighth workshop on syntax, semantics and structure in statistical translation. pp. 103-111

Gupta D, Suman S, Ekbal A (2021) Hierarchical deep multi-modal network for medical visual question answering. Expert Syst Appl 164:113993

Gurari D, Li Q, Stangl AJ, Guo A, Lin C, Grauman K, ..., Bigham JP (2018) Vizwiz grand challenge: Answering visual questions from blind people. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp. 3608–3617

He K, Zhang X, Ren S, Sun J (2016) Deep residual learning for image recognition. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 770-778

He B, Xia M, Yu X, Jian P, Meng H, Chen Z (2017, December) An educational robot system of visual question answering for preschoolers. In: 2017 2nd international conference on robotics and automation engineering (ICRAE). IEEE. pp. 441-445

Hildebrandt M, Li H, Koner R, Tresp V, Günnemann S (2020) Scene graph reasoning for visual question answering. arXiv preprint arXiv:2007.01072

Hu Z, Wei J, Huang Q, Liang H, Zhang X, Liu Q (2020, July) Graph convolutional network for visual question answering based on fine-grained question representation. In: 2020 IEEE fifth international conference on data science in cyberspace (DSC). IEEE. pp. 218-224

Hudson DA, Manning CD (2019) Gqa: a new dataset for real-world visual reasoning and compositional question answering. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. pp. 6700-6709

Johnson J, Hariharan B, Van Der Maaten L, Fei-Fei L, Lawrence Zitnick C, Girshick R (2017) Clevr: a diagnostic dataset for compositional language and elementary visual reasoning. In: proceedings of the IEEE conference on computer vision and pattern recognition. pp. 2901-2910

Kipf TN, Welling M (2017) Semi-supervised classification with graph convolutional networks. arXiv preprint arXiv:1609.02907. ICLR 2017

Krizhevsky A, Sutskever I, Hinton GE (2012) Imagenet classification with deep convolutional neural networks. Adv Neural Inf Proces Syst 25:1097–1105

Li D, Zhang Z, Chen X, Huang K (2018) A richly annotated pedestrian dataset for person retrieval in real surveillance scenarios. IEEE Trans Image Process 28(4):1575–1590

Narasimhan M, Lazebnik S, Schwing AG (2018, December) Out of the box: reasoning with graph convolution nets for factual visual question answering. In: Proceedings of the 32nd international conference on neural information processing systems. pp. 2659-2670

Norcliffe-Brown W, Vafeias E, Parisot S (2018, December) Learning conditioned graph structures for interpretable visual question answering. In: Proceedings of the 32nd international conference on neural information processing systems. pp. 8344-8353

Pennington J, Socher R, Manning CD (2014, October) Glove: global vectors for word representation. In proceedings of the 2014 conference on empirical methods in natural language processing (EMNLP). pp. 1532-1543

Probst P, Boulesteix AL, Bischl B (2019) Tunability: importance of hyperparameters of machine learning algorithms. J Mach Learn Res 20(1):1934–1965

Simonyan K, Zisserman A (2014) Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556

Singh AK, Mishra A, Shekhar S, Chakraborty A (2019) From strings to things: knowledge-enabled VQA model that can read and reason. In: Proceedings of the IEEE/CVF international conference on computer vision. pp. 4602-4612

Sundermeyer M, Schlüter R, Ney H (2012) LSTM neural networks for language modeling. In: Thirteenth annual conference of the international speech communication association

Teney D, Liu L, van Den Hengel A (2017) Graph-structured representations for visual question answering. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 1-9

Trott A, Xiong C, Socher R (2018, February) Interpretable Counting for Visual Question Answering. In: International Conference on Learning Representations

Wang X, Ye Y, Gupta A (2018) Zero-shot recognition via semantic embeddings and knowledge graphs. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 6857-6866

Wu Z, Pan S, Chen F, Long G, Zhang C, Philip SY (2020) A comprehensive survey on graph neural networks. IEEE transactions on neural networks and learning systems

Xu X, Wang T, Yang Y, Hanjalic A, Shen HT (2020) Radial graph convolutional network for visual question generation. IEEE transactions on neural networks and learning systems

Yang J, Lu J, Lee S, Batra D, Parikh D (2018a) Graph r-cnn for scene graph generation. In: Proceedings of the European conference on computer vision (ECCV). pp. 670-685

Yang Z, Yu J, Yang C, Qin Z, Hu Y (2018b) Multi-modal learning with prior visual relation reasoning. arXiv preprint arXiv:1812.09681, 3(7)

Yang Z, Qin Z, Yu J, Hu Y (2019) Scene graph reasoning with prior visual relationship for visual question answering. arXiv preprint arXiv:1812.09681

Yao T, Pan Y, Li Y, Mei T (2018) Exploring visual relationship for image captioning. In: Proceedings of the European conference on computer vision (ECCV). pp. 684-699

Yu J, Lu Y, Qin Z, Zhang W, Liu Y, Tan J, Guo L (2018, September) Modeling text with graph convolutional network for cross-modal information retrieval. In: Pacific rim conference on multimedia. Springer, Cham. pp. 223-234

Zhang Y, Hare J, Prügel-Bennett A (2018, February). Learning to Count Objects in Natural Images for Visual Question Answering. In: International Conference on Learning Representations

Zhou X, Shen F, Liu L, Liu W, Nie L, Yang Y, Shen HT (2020) Graph convolutional network hashing. IEEE Trans Cybern 50(4):1460–1472

Zhu X, Mao Z, Chen Z, Li Y, Wang Z, Wang B (2020) Object-difference derived graph convolutional networks for visual question answering. Multimed Tools Appl, 1-19 80:16247–16265

Funding

This work is supported by the National Key R&D Program of China No. 2017YFB1002101 and the Joint Advanced Research Foundation of China Electronics Technology Group Corporation No. 6141B08010102.

Cite this article

Yusuf, A.A., Chong, F. & Xianling, M. Evaluation of graph convolutional networks performance for visual question answering on reasoning datasets. Multimed Tools Appl 81, 40361–40370 (2022). https://doi.org/10.1007/s11042-022-13065-x

DOI: https://doi.org/10.1007/s11042-022-13065-x