Abstract

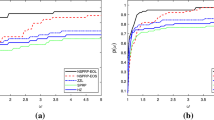

Positive bases are an important concept in direct search methods. Although any positive basis ensures convergence in theory, maximal positive bases are the ones most often used to construct direct search algorithms. In this paper, two direct search methods for computationally expensive functions are proposed based on minimal positive bases. The Coope–Price frame-based direct search framework is employed to ensure convergence. The PRP+ method and a recently developed descent conjugate gradient method are employed, respectively, to accelerate convergence. Data profiles and performance profiles from the numerical experiments show that the proposed methods are effective for computationally expensive functions.
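As a rough illustration of the terms used above, the following sketch builds one standard minimal positive basis (n + 1 directions) and one standard maximal positive basis (2n directions) in R^n, and evaluates the usual PRP+ parameter, beta = max(0, g_new^T (g_new - g_old) / ||g_old||^2). The function names are hypothetical, and the particular bases shown are textbook choices assumed for illustration; the abstract does not specify which minimal positive bases the paper actually uses.

```python
import numpy as np


def minimal_positive_basis(n):
    """Rows are n + 1 directions: e_1, ..., e_n and -(e_1 + ... + e_n).

    This is one common textbook choice, assumed here for illustration.
    """
    return np.vstack([np.eye(n), -np.ones((1, n))])


def maximal_positive_basis(n):
    """Rows are the 2n directions e_1, ..., e_n, -e_1, ..., -e_n."""
    identity = np.eye(n)
    return np.vstack([identity, -identity])


def has_descent_direction(directions, gradient):
    """True if some row d satisfies d . (-gradient) > 0."""
    return bool(np.any(directions @ (-gradient) > 0))


def prp_plus_beta(g_new, g_old):
    """PRP+ parameter: the Polak-Ribiere-Polyak value truncated at zero."""
    denom = float(g_old @ g_old)
    if denom == 0.0:
        return 0.0  # assumption: fall back to steepest descent
    return max(0.0, float(g_new @ (g_new - g_old)) / denom)


if __name__ == "__main__":
    n = 3
    d_min = minimal_positive_basis(n)
    d_max = maximal_positive_basis(n)
    print(d_min.shape[0], d_max.shape[0])  # number of directions per frame

    g = np.array([0.3, -1.2, 0.7])  # made-up gradient, for illustration only
    print(has_descent_direction(d_min, g), has_descent_direction(d_max, g))
```

The point of the comparison is the cost per frame: a frame built on the minimal basis needs n + 1 function evaluations rather than 2n, which matters when each evaluation is expensive.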

Additional information

Supported by the NNSF of China, Grants 11071087 and 10971058.

Cite this article

Liu, Q. Two minimal positive bases based direct search conjugate gradient methods for computationally expensive functions. Numer Algor 58, 461–474 (2011). https://doi.org/10.1007/s11075-011-9464-7

DOI: https://doi.org/10.1007/s11075-011-9464-7