Abstract

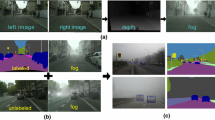

This work addresses the problem of semantic scene understanding under fog. Although marked progress has been made in semantic scene understanding, it has mainly concentrated on clear-weather scenes. Extending semantic segmentation methods to adverse weather conditions such as fog is crucial for outdoor applications. In this paper, we propose a novel method, named Curriculum Model Adaptation (CMAda), which gradually adapts a semantic segmentation model from light synthetic fog to dense real fog in multiple steps, using both labeled synthetic foggy data and unlabeled real foggy data. The method is based on the fact that the results of semantic segmentation in moderately adverse conditions (light fog) can be bootstrapped to solve the same problem in highly adverse conditions (dense fog). CMAda is extensible to other adverse conditions and provides a new paradigm for learning with synthetic data and unlabeled real data. In addition, we present four other main stand-alone contributions: (1) a novel method to add synthetic fog to real, clear-weather scenes using semantic input; (2) a new fog density estimator; (3) a novel fog densification method for real foggy scenes without known depth; and (4) the Foggy Zurich dataset, comprising 3808 real foggy images, with pixel-level semantic annotations for 40 images with dense fog. Our experiments show that (1) our fog simulation and fog density estimator outperform their state-of-the-art counterparts on the task of semantic foggy scene understanding (SFSU); and (2) CMAda significantly improves the performance of state-of-the-art models for SFSU, benefiting from both our synthetic and our real foggy data. The foggy datasets and code are publicly available.
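The curriculum described above can be illustrated with a minimal sketch. It is one plausible reading of the abstract, not the released implementation: the function `curriculum_adapt`, its callback parameters `train`, `predict` and `estimate_density`, and the rule of pseudo-labeling only those real images whose estimated fog density does not exceed the previous stage's synthetic density are all assumptions made for illustration.

```python
from typing import Any, Callable, List, Sequence, Tuple

Sample = Tuple[Any, Any]  # (image, label) pair; both types are placeholders


def curriculum_adapt(
    model: Any,
    synthetic_stages: Sequence[Tuple[float, Sequence[Sample]]],
    real_images: Sequence[Any],
    estimate_density: Callable[[Any], float],
    train: Callable[[Any, List[Sample]], Any],
    predict: Callable[[Any, Any], Any],
) -> Any:
    """Adapt `model` stage by stage, from light to dense fog (hypothetical sketch).

    synthetic_stages: (fog density, labeled synthetic samples), sorted light to dense.
    real_images: unlabeled real foggy images.
    """
    prev_density = None
    for density, labeled_synthetic in synthetic_stages:
        pseudo_labeled: List[Sample] = []
        if prev_density is not None:
            # Bootstrap: the model adapted to lighter fog labels the real
            # images it is assumed to handle reliably (assumed selection rule).
            easy_real = [img for img in real_images
                         if estimate_density(img) <= prev_density]
            pseudo_labeled = [(img, predict(model, img)) for img in easy_real]
        # Train on ground-truth synthetic labels plus pseudo-labeled real data.
        model = train(model, list(labeled_synthetic) + pseudo_labeled)
        prev_density = density
    return model


# Toy usage: "images" are just density values, and the "model" is a list
# recording how many samples each stage was trained on.
stages = [(0.005, [("s1", "y1"), ("s2", "y2")]), (0.01, [("s3", "y3")])]
real = [0.003, 0.004, 0.02]
history = curriculum_adapt(
    model=[],
    synthetic_stages=stages,
    real_images=real,
    estimate_density=lambda img: img,
    train=lambda m, data: m + [len(data)],
    predict=lambda m, img: "pseudo-label",
)
print(history)  # [2, 3]
```

In the toy run, the first stage trains on the two light-fog synthetic samples only; the second stage trains on one denser synthetic sample plus the two real images whose density is at most 0.005, labeled by the stage-one model.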

Notes

Creating fine pixel-level annotations for dense foggy scenes is very difficult.

Acknowledgements

This work is funded by Toyota Motor Europe via the research project TRACE-Zürich.

Additional information

Communicated by Anelia Angelova, Gustavo Carneiro, Niko Sünderhauf, Jürgen Leitner.

Cite this article

Dai, D., Sakaridis, C., Hecker, S. et al. Curriculum Model Adaptation with Synthetic and Real Data for Semantic Foggy Scene Understanding. Int J Comput Vis 128, 1182–1204 (2020). https://doi.org/10.1007/s11263-019-01182-4

DOI: https://doi.org/10.1007/s11263-019-01182-4